Last week at the NZPI planning conference I ran a small experiment during my presentation.

The session explored three scenarios from different parts of planning, each showing a possible way digital technology might change planning practice by 2030:

| Topic | Scenario | Question |
| --- | --- | --- |
| Policy | Using behavioural data to understand how public spaces are used | Q1: Should policy planners use 12 months of camera and sensor monitoring in public open space to understand how people use the space and to tailor engagement? |
| Consents | Structuring planning assessments as data rather than long narrative reports | Q2: Should consent planners ditch report writing in Word and instead enter all their reporting in data fields? |
| CME | Continuous monitoring of consent conditions using sensors and dashboards | Q3: Should CME planners rely on most consent holders to self-monitor and use technology to report to council and affected parties? |

For each scenario I asked the audience to vote twice.

First they voted based only on the initial question.

Then they voted again after I briefly discussed some of the technologies involved and the ethical implications.

Two different voting methods were used during the session, with audience members asked to stick with one method for all their votes:

- Slido polling, where participants could choose agree, disagree or unsure

- A show of hands in the room, where only agreement was counted

These methods captured slightly different results.

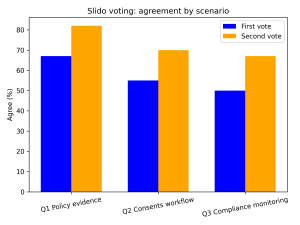

Slido results

In the Slido poll, at least half of respondents agreed with each scenario in the first vote (50% to 67%). Agreement increased across all three scenarios after participants reflected on how the technology might work and voted again.

Slido polling (percentage of respondents)

| Scenario | Agree first | Agree second | Change |
| --- | --- | --- | --- |
| Q1 Open space behavioural data | 67% | 82% | +15 pp |
| Q2 Structured digital reasoning | 55% | 70% | +15 pp |
| Q3 Continuous compliance monitoring | 50% | 67% | +17 pp |
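The Change column is a percentage-point difference, which is not the same thing as a relative increase. As a rough sketch, the Python below recomputes both from the figures in the table above. The function and variable names are illustrative only, and nothing here comes from Slido itself.

```python
# Slido agreement percentages as reported in the table: (first vote, second vote).
slido_agree = {
    "Q1": (67, 82),
    "Q2": (55, 70),
    "Q3": (50, 67),
}


def pp_change(first, second):
    """Percentage-point change: the simple difference between two percentages."""
    return second - first


def relative_change(first, second):
    """Relative change, expressed as a percentage of the first value."""
    return (second - first) / first * 100


# Percentage-point change for each scenario, matching the Change column.
changes = {q: pp_change(first, second) for q, (first, second) in slido_agree.items()}

for q, (first, second) in slido_agree.items():
    print(q, f"{changes[q]:+d} pp", f"{relative_change(first, second):.1f}% relative")
```

For Q3, for example, a 17-point rise from 50% corresponds to a relative increase of 34%.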

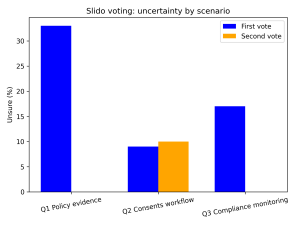

Another interesting pattern was the reduction in uncertainty.

In two of the three scenarios, the proportion of participants selecting unsure dropped to zero once the scenarios were explored further.

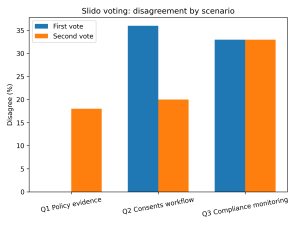

This suggests the discussion helped participants move from initial hesitation toward clearer positions (whether that was agree or disagree). The graph below shows how the share of disagree votes changed in the second vote: it rose from zero for Q1, fell for Q2 and stayed the same for Q3.

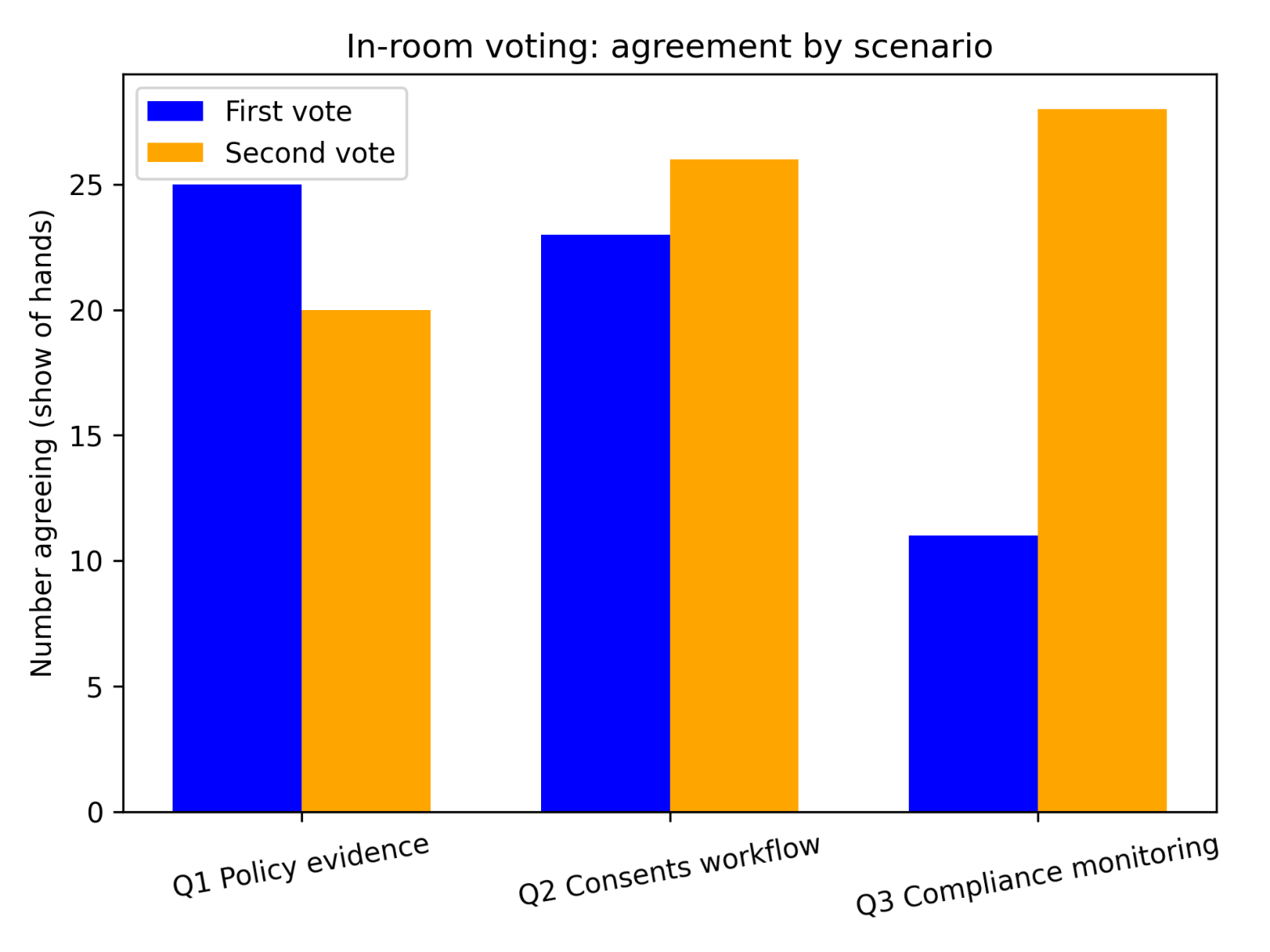

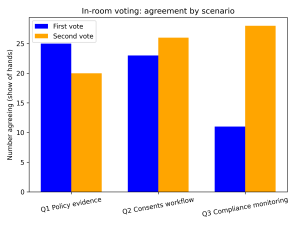

In-room voting

The show-of-hands voting told a slightly different story.

Initial agreement was positive and increased further for the second vote in two of the three scenarios, but decreased slightly in the first scenario:

In Room Voting (show of hands)

| Scenario | Agree first | Agree second | Change |
| --- | --- | --- | --- |
| Q1: Open space behavioural data | 25 | 20 | -5 |
| Q2: Structured digital reasoning | 23 | 26 | +3 |
| Q3: Continuous compliance monitoring | 11 | 28 | +17 |

The strongest shift occurred in the compliance monitoring example, where agreement in the room more than doubled after participants considered how the monitoring data, transparency dashboards and AI triage might operate.
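The same arithmetic can be applied to the show of hands, with one caveat: these are raw counts, and the number of people voting by hand is not reported here, so they cannot be turned into percentages or compared directly with the Slido figures. A minimal sketch, again with illustrative names:

```python
# In-room hands raised in agreement: (first vote, second vote).
in_room_agree = {
    "Q1": (25, 20),
    "Q2": (23, 26),
    "Q3": (11, 28),
}


def summarise(first, second):
    """Return the change in raw count and the ratio of second vote to first."""
    return {"change": second - first, "ratio": second / first}


summary = {q: summarise(first, second) for q, (first, second) in in_room_agree.items()}

for q, s in summary.items():
    print(q, f"{s['change']:+d} hands", f"x{s['ratio']:.2f}")
```

A ratio above 2 for Q3 is what "more than doubled" refers to; a ratio below 1 for Q1 reflects the small drop in agreement.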

What might this suggest?

This was not a formal study – the sample size was small and such voting is inherently informal. Because the presentation was in the digital stream of the conference, the audience was more likely than the average planner to be interested in digital technology (and therefore to hold existing opinions). But the exercise highlighted a few interesting patterns.

First, there was healthy support for the scenarios in the first vote. This may reflect the digital interest of those attending the session, so it would be worth repeating the exercise with a more varied cross-section of planners. If this support were widespread in the profession, it would indicate an existing willingness to move to more modern methods, and that willingness should be capitalised on.

Second, there is some indication that reactions may vary depending on where the technology sits within the planning system. Technology supporting evidence gathering for policy seemed relatively easy for participants to accept. Technology that might reshape consent reporting processes generated more caution. And technology aimed at solving operational challenges such as compliance monitoring generated the strongest shift in support once the practical benefits were considered.

Third, the Slido poll, which allowed participants to respond with unsure, showed that uncertainty reduced significantly once participants reflected on the scenarios in more detail.

Taken together, the results suggest that some hesitation around digital approaches in planning may reflect uncertainty or unfamiliarity rather than firm opposition.

A small experiment in professional reflection sparking a big conversation for our profession

For me, the most interesting takeaway was how quickly discussion about practical examples can move the conversation. I presented only 2-3 minutes of information on each scenario, covering the technologies and the ethical aspects, and we still saw noticeable shifts in opinion.

Planners are not necessarily resistant to technology. But they want to understand how it fits with professional judgement, ethics, and the realities of practice.

Exploring possible futures – even briefly – can help make those questions more concrete.

The most striking change wasn’t just the increase in agreement, but the drop in uncertainty. Once participants briefly explored how the technologies might work, most people felt able to take a clearer position. We need to debate what best practice looks like as we adopt more powerful technology. There is a role for all planners in that conversation, whether they agree or disagree. The debate surfaces the real practice issues and ethical conundrums, and enables us to chart a way forward for all planners.

Let’s continue to have these important conversations.

Today’s technology decisions shape tomorrow’s planning reality. Let’s be brave and deliberate about creating that future.

Thank you to everyone who took part in this session, and to Chloe Smith from Objective for operating the Slido poll and counting the in-room votes.